Insights from Fidelity International’s 2025 Australian AGM season AI governance survey

The impact of artificial intelligence (AI) is a key area of focus for Fidelity International’s stewardship team. Drawing on our experience from our Digital Ethics thematic, we are now expanding our focus to address risks associated with the rapid growth of data centres, as well as the effect of AI adoption on human capital. In this article, we focus on AI governance and risk management, a central theme that can enhance opportunities and mitigate risks associated with AI adoption and ultimately influence long-term value creation.

Executive summary

The accelerated push to embed AI into business operations has transformed AI governance from an abstract ethical debate into a critical aspect of corporate oversight. As AI increasingly influences decision-making, workforce dynamics, and strategy implementation, companies are undergoing scrutiny related to how these technologies are designed, monitored, and controlled.

Over the course of the Australian 2025 AGM season, Fidelity International conducted a bespoke AI governance survey across 31 ASX-listed companies spanning multiple sectors and sizes. We engaged directly with boards, asking structured questions across five key dimensions: 1) Strategy and value creation, 2) Board oversight and capabilities, 3) AI risk and controls, 4) Governance and ethical AI practices, and 5) Workforce impact. We then benchmarked the responses against Microsoft's AI Maturity Spectrum, a constructive framework for assessing organisational AI readiness, to produce a comparative view of where ASX boards stand today.

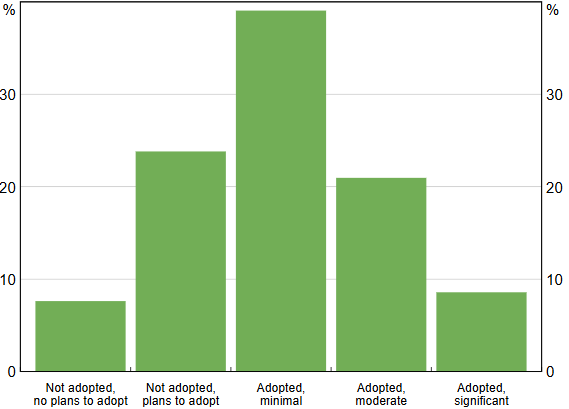

The benchmark: Where surveyed ASX boards stand today

Fidelity's ASX AI maturity benchmark assessment results

Based on Microsoft's Enterprise AI maturity framework. Surveyed companies only.


Source: Fidelity International, Microsoft’s Enterprise AI maturity in five steps: Our guide for IT leaders - Inside Track Blog

This benchmarking exercise produced a distribution that, while encouraging in parts, underscores the significant work that remains, particularly at the governance and accountability layer.

Key findings at a glance

- Uneven maturity across the ASX: A small cohort of companies is demonstrating genuine progress, with AI embedded into strategy and supported by developing governance frameworks. However, a larger group remains at an early or transitional stage, with board oversight struggling to keep pace with the speed of AI deployment.

- AI governance is becoming investment relevant: Although AI governance may not be an obvious lens for investment analysis, we believe it is becoming increasingly material. As AI adoption accelerates, governance gaps can translate into operational, regulatory, reputational and workforce risks faster than many boards anticipate.

- Board capability is strengthening: Many boards are actively building the literacy, oversight structures and accountability needed for effective governance. Boards are prioritising director education and responsible‑use policies, though translation into decision‑making, measurable outcomes and assurance remains uneven.

- AI is increasingly strategic, with value creation concentrated in efficiency gains: Boards now recognise AI as a core strategic enabler, though near‑term benefits remain heavily skewed toward cost and productivity. Around 70% of surveyed boards still frame AI primarily as an efficiency lever, with a smaller cohort emphasising product innovation or customer‑led growth.

- AI amplifies existing risks: AI increases the speed, reach and impact of errors, meaning small incidents can escalate quickly and attract outsized scrutiny. Organisations are beginning to integrate AI into enterprise risk frameworks with human‑in‑the‑loop controls, but gaps in mitigation are emerging as systems scale faster than oversight.

- Ongoing engagement is critical: The divergence in AI maturity across the ASX carries real implications for long-term value creation and risk. We view AI governance as an emerging stewardship priority and intend to continue engaging with boards to encourage stronger oversight, clearer accountability and more resilient governance frameworks.

Introduction: A governance inflection point

The emergence of large language models at scale and the mainstream adoption of generative AI tools in the last three years have fundamentally changed the nature and urgency of the AI conversation in boardrooms. What had for years been a topic confined to technology committees and R&D roadmaps has, in a compressed period of time, become a first-order strategic and governance issue for boards of all sizes and sectors.

AI adoption stage by Australian firms

Proportion of respondents

Source: RBA, November 2025

As a long-term, active steward of capital across the ASX, Fidelity International believes that how a board governs AI will be a meaningful differentiator of long-term value creation. Against that backdrop, we took the 2025 AGM season as an opportunity to go beyond the standard AI disclosure review and engage directly and systematically with boards.

Red flags: Where governance is falling short

Across the cohort, several patterns of concern emerged consistently enough to warrant direct attention. We flag these not to alarm, but because we believe transparency about risk is a precondition of progress. These areas will be a focus of our ongoing board engagement:

- CEO–Board disconnect on AI narrative. Contradictory answers from the Chair and CEO on AI strategic prioritisation and customer‑facing AI are concerning. Mixed messaging elevates material disclosure risk and could point to weak oversight of AI decisions and gaps in escalation protocols and board information quality.

- Bloated AI pipelines without prioritisation. Claims of “100+ use cases” or a “300‑page deck” are another concern, as they highlight a lack of prioritisation and potentially an immature approach to adoption. Large pipelines could lead to organisational distraction and inappropriate investment decisions.

- Minimal articulation of “no‑go” criteria. While AI impact assessments are still maturing, several high‑risk areas, such as workforce surveillance and biased customer treatment, are already well recognised. It was therefore a key gap that few boards had defined “no‑go” zones for AI use. Beyond customer data privacy, most boards lacked clear responses on where AI should not be deployed, signalling a material oversight area that requires further work.

- Workforce disruption downplayed. Over 40% of respondents report that they expect minimal impact in the next 12–24 months or claim it’s too early. We believe this in part reflects reputational sensitivities after recent backlash to AI‑linked redundancies. While we agree it may be too early for boards in some sectors to take a view, we believe it is important for companies to already be thinking about workforce transformation, training and support systems.

While these red flags may appear isolated, we are conscious that AI adoption amplifies existing risks. AI accelerates the scale, speed and visibility of failures, and public tolerance for AI errors is markedly lower than for human ones.

Bright spots: Interesting actions companies are taking

Red flags are real, but they do not define the whole picture. Across our 31-company survey, a number of companies are approaching AI governance with a seriousness and creativity that stand out and, in some cases, offer a practical template for peers. These are not necessarily the largest companies in our universe, nor the most AI-mature in terms of deployment. They are the companies where governance ambition is matching the pace of adoption. Below we share some examples of the leading actions companies are taking:

- Hiring as an execution signal. 45% of companies surveyed added AI talent over the last 12–24 months, from single specialists to material team builds. Boards tying hiring to roadmaps, budgets and workforce training are signalling execution and long-term commitment.

- AI incentives in remuneration and capability. Surprisingly, some companies are already incorporating AI incentives into their remuneration. Companies are pairing upskilling programs with KPIs. Some are pushing “AI native” builds, while others are embedding usage targets in STI/LTI. Done well, incentives could align organisational goals and emphasise adoption quality, safety, productivity and impact.

- Digital leaders have an AI edge. Responses consistently reinforced that legacy investment in digitalisation, data quality and cloud migration was the primary determinant of current AI readiness. Robust data governance and quality are prerequisites.

The most important observation is that none of the leading practices we identified require an AI-native business. Instead, they require deliberate board leadership and the discipline to build governance frameworks before they are demanded by regulators.

Emerging risks: What we are watching

Beyond the governance gaps identified through our survey, this engagement exercise has brought into focus a set of forward-looking risks that are not yet fully visible in board conversations but that we believe may become tangible in the near-term. These are risks that sit at the intersection of AI's technical trajectory, the evolving regulatory environment, and the competitive dynamics of the Australian market. We are monitoring these actively as part of our investment and stewardship research, and we expect to incorporate them into our engagement agenda in the 2026 AGM season.

- ERM integration dominates. According to AI risk experts, companies can adopt one of two risk models: 1) embed AI within the existing enterprise risk management (ERM) framework or 2) set up a separate AI risk framework. All surveyed boards above Stage 1 (Awareness) indicated that AI is being embedded within existing ERM frameworks and that they are adopting a cyber-led approach. While we currently view this approach as appropriate, we will continue to monitor this area, as integration can under-recognise AI-specific scaling and accountability risks.

- Shadow AI is a key blind spot. Unauthorised employee use of public tools creates data leakage, IP loss and compliance risk. Not a single board raised it as a core risk area, despite evidence that 69% of organisations suspect prohibited use and 78% of users bring their own tools.

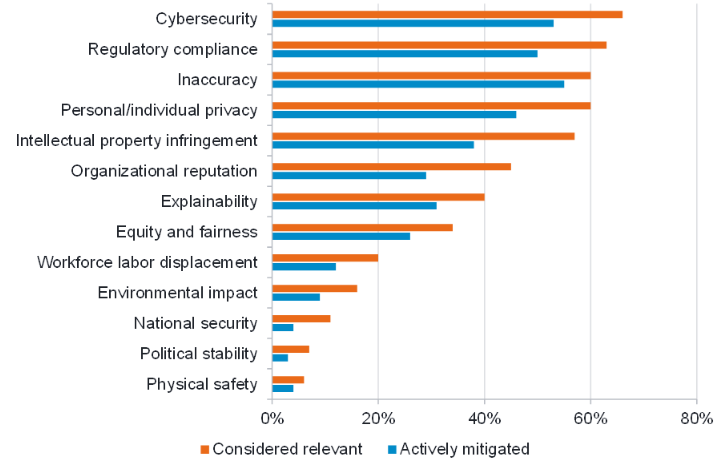

- IP protection shapes AI posture. IP-intensive businesses appear to be adopting cautious, controlled deployment to protect core assets. While IP protection is central to value capture, AI conservatism could slow innovation. McKinsey’s AI risk survey highlights IP infringement as a key risk that is not being actively mitigated. We agree and plan to do more work in this area.

A clear gap exists between recognised AI risks and mitigating them, most notably for IP infringement

AI risks: Considered relevant vs actively mitigated (2024)

Source: McKinsey & Company Survey, 2024

- “Human in the loop” is insufficient alone. The main risk-mitigation argument that boards outlined was that AI is currently not being deployed as an autonomous decision-maker and instead serves as a decision-support overlay with explicit human intervention. While constructive, “human-in-the-loop” mechanisms are increasingly being identified as an insufficient risk management tool, and companies will need to develop improved second- and third-line risk mitigation mechanisms.


Methodology: What we asked and how we benchmarked companies

Our survey was designed to probe the specifics of how AI is being governed at the board level. We engaged 31 ASX-listed companies across the financials, resources, healthcare, technology, consumer, gaming, real estate, media, industrials, and infrastructure sectors, conducting structured interviews and written submissions tied to the 2025 AGM engagement cycle.

Questions were framed to elicit both qualitative insight and assessable evidence. The five thematic areas we covered, and the nature of the questions within each, are summarised below.

| Five AI domains | Key focus areas |
|---|---|
| 1. Strategy and value creation | AI use cases, capital allocation to AI, ROI articulation and competitive positioning |
| 2. Board oversight & skills | Frequency of Board-level AI discussion, director capability and training as well as accountability structures |
| 3. Risk & controls | AI risk identification and taxonomy, approach to AI risk integration, data quality and infrastructure approach |
| 4. Governance & ethical AI | Policies, acceptable use frameworks, data ethics, and “no-go” criteria |
| 5. Workforce impact | Near-term workforce implications, reskilling strategies, role displacement considerations |

The benchmark: Where ASX boards stand today

Responses were assessed and scored by our Sustainable Investment team, with each company mapped to a stage on Microsoft's AI Maturity Spectrum, a five-stage framework that provides a robust basis for benchmarking organisational AI readiness.

The table below summarises the five stages of the framework, their defining characteristics, and the percentage of companies in our sample that we assessed as operating at each level.

| Stage | Maturity level | Characteristics | % of surveyed companies |
|---|---|---|---|
| Stage 0 | Too early | AI is not yet an active enterprise priority beyond awareness | 13% |
| Stage 1 | Aware & foundation | Ad hoc experimentation; no formal AI strategy or board-level mandate. Core data, technology and guardrails are being put in place | 26% |
| Stage 2 | Active pilots & skill building | Initial AI use cases identified; some governance frameworks emerging. Use-cases are being trialled in pockets with early lessons on value and risk | 9% |
| Stage 3 | Operationalise & govern | Formal AI strategy in place; selected use-cases are in production with defined ownership, controls and performance tracking | 13% |
| Stage 4 | Enterprise-wide adoption | AI embedded across key functions; mature governance and risk controls | 16% |
| Stage 5 | Transformational & monetisation | AI is a core strategic differentiator; comprehensive governance, ethics frameworks, and workforce transition plans in place | 23% |

Note: Stage allocations reflect our Sustainable Investment team's assessment of each company's responses across all five survey dimensions and do not include other publicly disclosed information. Companies are not identified. The distribution is based solely on the 31-company survey universe and is not intended to be representative of the broader ASX.
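For readers who want to relate the published percentages back to the 31-company sample, the arithmetic can be sketched as below. This is an illustrative calculation only: the company counts are implied by rounding the published shares and are not figures reported in the survey, and the names `STAGE_SHARES`, `implied_counts` and `cumulative_share` are hypothetical helpers introduced for this example.

```python
# Published stage shares (percent) from the benchmark table.
# The sample size of 31 comes from the survey description.
SAMPLE_SIZE = 31
STAGE_SHARES = {0: 13, 1: 26, 2: 9, 3: 13, 4: 16, 5: 23}


def implied_counts(shares, sample_size):
    """Convert rounded percentage shares into the nearest whole-company counts.

    These counts are an inference from the rounded percentages,
    not numbers stated in the survey.
    """
    return {stage: round(pct * sample_size / 100) for stage, pct in shares.items()}


def cumulative_share(shares, min_stage):
    """Sum the published shares for all stages at or above min_stage."""
    return sum(pct for stage, pct in shares.items() if stage >= min_stage)


counts = implied_counts(STAGE_SHARES, SAMPLE_SIZE)
print("Implied company counts by stage:", counts)
print("Total implied companies:", sum(counts.values()))
print("Share at Stage 3 or above (%):", cumulative_share(STAGE_SHARES, 3))
```

Read this way, the published shares are consistent with a whole-number split of the 31 companies, and just over half of the sample sits at Stage 3 or above, with the remainder at Stage 2 or below.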

Conclusion: Our ongoing engagement lens

The picture that emerges from our 2025 AGM season engagement is one of a market in genuine transition. Ambition around AI is real, and early momentum is evident. However, the governance infrastructure required to sustain and protect that ambition remains a work in progress. This is not a criticism of intent. The pace of change in AI development is genuinely exceptional, and boards are navigating this terrain without the benefit of established playbooks or settled regulatory frameworks.

What we are asking of boards is deliberateness and clarity: clarity on where AI is being deployed and why; accountability structures that regularly bring AI-related risk and strategy into the boards’ line of sight; and governance frameworks that are proportionate to the scale of deployment and designed to grow alongside it. We also see a clear need for a genuine commitment to the workforce dimension, because how companies manage the human impact of AI will matter to employees, regulators, and communities in ways that will ultimately matter to investors.

Fidelity International will continue to engage actively with boards on these issues through our annual stewardship program. We will revisit these themes in 2026, using the benchmark established here as a baseline for assessing progress.